Human-Autonomy Interaction

Annual Plan

Virtual Experimentation for Soldier Evaluation of Autonomous and Non-Autonomous Technologies Using Multi-User Immersive Gaming Environments

Project Team

Government

Mark Brudnak, Christopher Mikulski, Joseph Schneider, Ryan Wood, Ben Hayes, U.S. Army GVSC

Faculty

Jia Li, Shadi Alawneh, Osamah Rawashdeh, Oakland University

Industry

Jeremy Cooper, NTT Data

Student

Andrea Macklem-Zabel, Sean Dallas, Absalat Getachew, Motaz Abuhjileh, Mohammad Alzyout (Ph.D.), Solaf Athamnah, Fares Al-Shubeilat, Abed AlJundi, Yashwanth Naidu Tikkisetty, Jerry Liao, Cameron Lu (M.S.), Oakland University

Project Summary

Projects #2.A97 and #2.A98 began in Q4 2022 and were completed in Q3 2025.

Existing human-technology user studies primarily rely on physical prototypes or, occasionally, on technologies with limited interaction capabilities, which may not give users the immersion and realism needed to draw insights that predict future real-world user behavior. In particular, current simulation technology lacks approaches for rapidly generating immersive and realistic human-technology interaction experiments, and the frameworks that do exist remain unsuitable for military applications. The literature also does not establish what level of fidelity simulated human-technology interactions require to produce results comparable to those of physical experimentation. This project aimed to address these research gaps by answering the following broad research questions:

- How can human-technology interactions be rapidly prototyped in video game engines to support soldier interactions during virtual experiments?

- What is the level of virtual fidelity necessary to produce results comparable to corresponding physical experiments in different contexts?

- How can large-scale, multi-user virtual experiments on new enabling technologies be conducted and analyzed effectively?

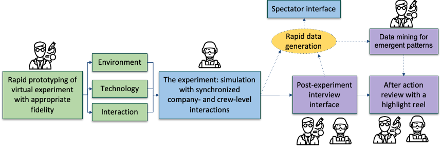

This project focused on developing a foundational platform for investigating large-scale human-technology interactions through virtual experiments in video game environments. To that end, we developed an all-in-one virtual prototyping and experimentation platform that supports the rapid design, development, implementation, and analysis of human-technology interactions and experiments within video game environments. The project had three specific objectives:

- Rapid prototyping of human-technology interactions for virtual experimentation

- Characterizing the necessary level of fidelity for ecologically valid virtual experiments

- Conducting and analyzing virtual experiments on new enabling technologies

These objectives were addressed in this project through six sub-projects:

- Behavioral cloning of autonomous agents using low-cost heuristics (Objective 1), by creating an environment in Unreal Engine (UE) 5 representative of GVSC-relevant scenarios and a communication framework enabling two-way communication between autonomy models and UE5. A dataset of low-dimensional expert demonstrations was produced within the simulation environment and used to train an autonomy model that clones the expert’s behavior.

- Rapid prototyping of immersive environments to replicate real-world settings (Objective 1), by developing a complete workflow for 3D reconstruction and VR deployment, including automated indoor and outdoor image acquisition using robots and UAVs.

- Rapid prototyping of crew stations to support manned-unmanned teaming (Objectives 1 and 2), by answering the following questions: (a) How do varying levels of haptic fidelity affect user presence, embodiment, and system usability in gesture-based VR tasks? (b) How do different haptic fidelity levels influence performance metrics such as accuracy, error rate, speed, and task completion time? (c) Are continuous gestures (pan, pinch) more sensitive to differences in haptic fidelity than discrete gestures (tap, swipe)?

- Semi-automated data analysis and spectating during virtual experimentation (Objective 3), by developing approaches to segment individual HRT sessions into discretized trajectories for analysis, developing unsupervised learning techniques to identify behaviors from the aggregated dataset of discretized trajectories, and developing an HRT virtual experiment, collecting and curating a representative dataset with ground-truth behavior labels.

- Scalable machine-assisted interviews of soldier experiences with combat vehicle technologies (Objective 3), by identifying and defining the verbal and nonverbal interviewer behaviors that contribute to high-quality interviews, translating these behaviors into system-level design principles, investigating how varying levels of communication richness influence interview effectiveness, and conducting a user study to compare the performance of the developed machine interviewer systems.

- Multimodal sentiment analysis during virtual experimentation (Objective 3).
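The behavioral cloning sub-project treats imitation as supervised learning: state-action pairs recorded from an expert in simulation become training data for a policy that reproduces the expert's choices. The sketch below illustrates only that general idea under stated assumptions. The two state features, the `expert_policy` stand-in, and the linear model fit by gradient descent are all hypothetical simplifications; they are not the project's UE5 communication framework, its demonstration format, or its actual autonomy model.

```python
import random
from typing import List, Sequence, Tuple

# Hypothetical demonstration format: (low-dimensional state, scalar action).
Demo = Tuple[Sequence[float], float]


def expert_policy(state: Sequence[float]) -> float:
    """Hypothetical stand-in expert: steer back toward a path centerline."""
    heading_error, lateral_offset = state
    return 0.5 * heading_error - 0.2 * lateral_offset


def collect_demos(n: int, seed: int = 0) -> List[Demo]:
    """Sample states and record the expert's action for each one."""
    rng = random.Random(seed)
    demos = []
    for _ in range(n):
        state = (rng.uniform(-1, 1), rng.uniform(-1, 1))
        demos.append((state, expert_policy(state)))
    return demos


def train_linear_policy(demos: List[Demo], lr: float = 0.1,
                        epochs: int = 1000) -> List[float]:
    """Behavioral cloning as regression: fit action ~ w . [state, 1] by
    full-batch gradient descent on the mean squared error."""
    dim = len(demos[0][0])
    w = [0.0] * (dim + 1)  # last weight is the bias term
    n = len(demos)
    for _ in range(epochs):
        grad = [0.0] * (dim + 1)
        for state, action in demos:
            x = list(state) + [1.0]
            err = sum(wi * xi for wi, xi in zip(w, x)) - action
            for j in range(dim + 1):
                grad[j] += 2.0 * err * x[j] / n
        w = [wi - lr * gj for wi, gj in zip(w, grad)]
    return w


def cloned_policy(w: Sequence[float], state: Sequence[float]) -> float:
    """Apply the learned (cloned) policy to a new state."""
    x = list(state) + [1.0]
    return sum(wi * xi for wi, xi in zip(w, x))


if __name__ == "__main__":
    weights = train_linear_policy(collect_demos(200))
    print([round(v, 3) for v in weights])
```

Because the stand-in expert is linear and noise-free, the fitted weights should approach the expert's coefficients; a real autonomy model would typically use a richer function approximator and noisy human demonstrations.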
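The semi-automated analysis sub-project segments individual HRT sessions into discretized trajectories and then applies unsupervised learning to group them into behaviors. A minimal sketch of that pipeline follows, assuming 2D positions sampled at a fixed rate. The fixed-length windowing, the two hand-picked features (mean step length and straightness), and k-means clustering are hypothetical choices made for illustration and should not be read as the project's actual segmentation or learning techniques.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def segment(traj: Sequence[Point], window: int) -> List[List[Point]]:
    """Split one session's trajectory into consecutive fixed-length windows
    (window >= 2); any trailing partial window is dropped."""
    return [list(traj[i:i + window])
            for i in range(0, len(traj) - window + 1, window)]


def features(seg: Sequence[Point]) -> Tuple[float, float]:
    """Hypothetical per-segment features: mean step length (a speed proxy)
    and straightness = net displacement / path length."""
    steps = [math.dist(a, b) for a, b in zip(seg, seg[1:])]
    path = sum(steps)
    net = math.dist(seg[0], seg[-1])
    return (path / len(steps), net / path if path > 0 else 0.0)


def kmeans(points: List[Tuple[float, ...]], k: int, iters: int = 50):
    """Plain k-means with deterministic farthest-point initialization."""
    centers = [points[0]]
    while len(centers) < k:
        centers.append(max(points, key=lambda p: min(math.dist(p, c) for c in centers)))
    labels = [0] * len(points)
    for _ in range(iters):
        # Assign each segment to its nearest cluster center.
        labels = [min(range(k), key=lambda j: math.dist(p, centers[j])) for p in points]
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            # Keep the old center if a cluster ends up empty.
            new_centers.append(tuple(sum(c) / len(members) for c in zip(*members))
                               if members else centers[j])
        if new_centers == centers:
            break
        centers = new_centers
    return labels, centers


def synthetic_session() -> List[Point]:
    """Hypothetical session: straight 'transit' chunks and back-and-forth
    'loiter' chunks, each 10 samples long."""
    traj: List[Point] = []
    x = 0.0
    for kind in ["transit", "transit", "loiter", "loiter", "transit"]:
        for i in range(10):
            if kind == "transit":
                x += 1.0
                traj.append((x, 0.0))
            else:
                traj.append((x + (0.2 if i % 2 else 0.0), 0.0))
    return traj


if __name__ == "__main__":
    feats = [features(s) for s in segment(synthetic_session(), 10)]
    labels, _ = kmeans(feats, 2)
    print(labels)
```

On real data the features would usually be standardized before clustering, since speed and straightness live on different scales, and the window length would be tuned to the behaviors of interest.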

2.A97, 2.A98

Publications:

Liao, Y., Lu, Z., Louie, G., Li, J., & Brudnak, M. (2025, May). Attention-Driven Adaptive Deep Canonical Correlation Analysis for Multimodal Sentiment Analysis with EEG and Eye Movement Data. In 2025 IEEE World AI IoT Congress (AIIoT) (pp. 409–415). IEEE.

Lu, Z., Liao, Y., & Li, J. (2025). Translation-based multimodal learning: A survey. Intelligence & Robotics, 5 (3), 783–804.

Al-Shubeilat, F., Athamnah, S., AlJundi, A. R., Brudnak, M., Wood, R., Louie, W. Y. G., & Rawashdeh, O. (2025). Evaluating the Haptic Touchscreen Experience in VR (No. 2025-01-0470). SAE Technical Paper.

Alzyout, M. S., Tikkisetty, Y. N., Alawneh, S., Brudnak, M. J., & Louie, W. Y. G. (2025, July). Automated Indoor Data Acquisition with Stretch Robot for Photogrammetry and Immersive Virtual Reality. In 2025 10th International Conference on Automation, Control and Robotics Engineering (CACRE) (pp. 107–113). IEEE.