Human-Autonomy Interaction

Annual Plan

Situation Awareness (SA) and Trust Repair in Multi-Agent Human-Automation Teams

Project Team

Government

Jonathon Smereka, Kayla Riegner, U.S. Army GVSC

Industry

Samantha Dubrow, MITRE Corporation

Student

Conner Esterwood, University of Michigan

Project Summary

Exploratory project #2.A94 began in 2021 and was completed in Q1 2023.

How can multiple teams composed of soldiers, soldier-operated vehicles, tele-operated vehicles, and autonomous vehicles effectively accomplish unit goals in dynamic environments? Answers to this question speak directly to what the Department of Defense calls “revolutionary collaboration”, in which soldiers are expected to view machines as valuable and critical teammates that collaborate with humans within hierarchical military unit structures.

The premise of this project is that the success of MUM-T (manned-unmanned teaming) depends on maintaining shared situation awareness (SA) and repairing trust in a Multiteam System (MTS) consisting of both manned and unmanned agents. SA is the perception and comprehension of information that allows individuals to project future courses of action and respond properly to a dynamic environment. At the team level, SA is defined as “the degree to which every team member possesses the SA required for his or her responsibilities”. Robot teammates, like human teammates, make mistakes that undermine trust. It is therefore critical to understand how human trust in robots can be repaired.

The research objectives are:

- Identify the information needed to support SA and trust repair to avoid failure.

- Assess the impact of autonomy on SA, trust repair, and cognitive load.

- Identify how trust can be effectively repaired and maintained to preserve effective teaming in situations where autonomy fails.

This project found that promises (explicit statements conveying an intention to improve one’s future behavior) can restore trust in a faulty system after autonomy fails or makes mistakes. This matters because such systems can improve, either through self-learning or through hot patches and updates. In practice, it may therefore be worthwhile to give these machines the capacity to make verbal promises when improvement is likely. This finding is underscored in the Human Factors and Ergonomics Society paper published as a result of this work. Importantly, these repairs should be used only when errors are likely to be infrequent and not persistent, because repeated promises were not effective in restoring trust and, by extension, use.

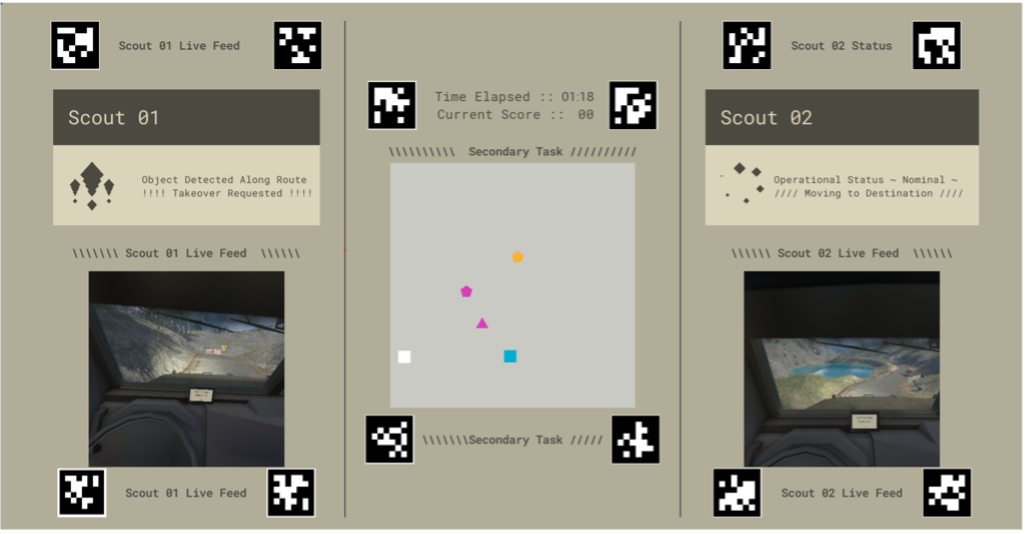

In addition to the paper detailing the results of the study, this work produced methods and a simulator that has been deployed internally in other projects (2.17 and IE.01). The simulator is being developed further and has also been published as open-source software available to other researchers.

Publications:

Esterwood, C., Ali, A., George, Z., Dubrow, S., Smereka, J., Riegner, K., … & Robert Jr, L. P. (2023, September). Promises and trust repair in UGVs. In Proceedings of the Human Factors and Ergonomics Society Annual Meeting (Vol. 67, No. 1, pp. 512-518). Los Angeles, CA: SAGE Publications.