Human-Autonomy Interaction

Annual Plan

In-the-wild Question Answering: Toward Natural Human-Autonomy Interaction

Project Team

Government

Matt Castanier, U.S. Army GVSC

Faculty

Mihai Burzo, University of Michigan

Industry

Glenn Taylor, Soar Technology, Inc.

Student

Santiago Castro, University of Michigan

Project Summary

Project #2.15 began in 2021 and was completed in 2023.

Current autonomous vehicles can explore large, uncharted spaces in a short amount of time. However, they cannot "report back" the information they collect in a manner that is easily accessible to human users and does not cause information overload. As an example, consider a manned vehicle followed by several autonomous vehicles. To maintain situational awareness for the entire fleet, the human driver in the lead vehicle needs to communicate with the autonomous vehicles in ways that are similar to human-human communication.

The main research question we are addressing is how to effectively and efficiently perform natural question answering against a large visual data stream. We are specifically targeting in-the-wild question answering, where the visual data stream closely represents real-world settings and reflects the challenges of complex and dynamic environments.

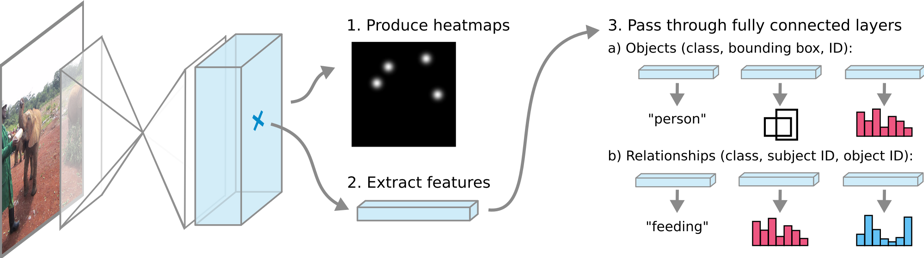

The project targets three main research objectives: (1) Construct a large dataset of video recordings paired with natural language questions that are representative of complex in-the-wild environments. This dataset will be used to both train and test in-the-wild multimodal question answering systems that can be used to enhance situational awareness. (2) Develop visual representation algorithms that convert the visual streams into semantic graphs that capture the entities in the videos as well as their relations. (3) Develop algorithms for multimodal question answering that aim to understand the type and intent of the questions, and map them against the semantic graph representations of the visual streams to identify one or more candidate answers.
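As a rough illustration of objectives (2) and (3), the sketch below stores entities and their relations as a small graph and looks up candidate answers for a question that has already been reduced to a subject and a predicate. It is a toy example under stated assumptions, not the project's implementation: the `Relation` and `SceneGraph` names, the relation labels, and the sample detections are all hypothetical, and the steps of extracting relations from video and parsing a natural language question are assumed to happen elsewhere.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Relation:
    """One (subject, predicate, object) edge observed in a video segment."""
    subject: str
    predicate: str
    obj: str
    start_s: float  # segment start time, in seconds
    end_s: float    # segment end time, in seconds


class SceneGraph:
    """Toy semantic graph: entities are nodes, relations are labeled edges."""

    def __init__(self):
        # Index edges by subject entity for direct lookup.
        self._by_subject = defaultdict(list)

    def add(self, relation):
        self._by_subject[relation.subject].append(relation)

    def query(self, subject, predicate):
        """Return candidate answers (objects) with the time span supporting each."""
        return [
            (r.obj, r.start_s, r.end_s)
            for r in self._by_subject.get(subject, [])
            if r.predicate == predicate
        ]


# Hypothetical detections, invented for illustration only.
graph = SceneGraph()
graph.add(Relation("vehicle", "crosses", "bridge", 12.0, 18.5))
graph.add(Relation("vehicle", "approaches", "checkpoint", 40.0, 47.0))
graph.add(Relation("person", "stands_near", "bridge", 10.0, 20.0))

# "What does the vehicle cross?" reduced by hand to (subject, predicate).
answers = graph.query("vehicle", "crosses")
print(answers)
```

Keeping a time span on each edge lets a candidate answer point back to the video segment that supports it, which is one plausible way to limit how much footage a human user has to review.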

Our project makes new contributions by developing novel multimodal question answering algorithms relying on semantic graph representations, and addressing real and challenging settings. Most of the algorithms that have been previously proposed for multimodal question answering have been developed and tested on data drawn from movies and TV series, which consist of acted, scripted, well-directed and heavily edited video clips that are hard to find in the real world. In contrast, our project has to overcome the challenge of environmental noise, low lighting conditions, scenes that are less defined and not perfectly framed, and lack of subject permanence.

Other Publications:

- Oana Ignat, Towards Human Action Understanding in Social Media Videos Using Multimodal Models, PhD Dissertation, August 2022.

Software:

- In-the-wild Data
  https://github.com/MichiganNLP/In-the-wild-QA/tree/main/src/example_data/wildQA-data

- In-the-wild Question Answering benchmark and models
  https://github.com/MichiganNLP/In-the-wild-QA

- Video fill-in-the-blanks question answering: benchmark and models
  https://github.com/MichiganNLP/vlog_action_recognition

- Scalable probing of language-vision models
  https://github.com/MichiganNLP/Scalable-VLM-Probing

- Graph semantic representations for action recognition
  https://github.com/MichiganNLP/video-fill-in-the-blank

* The project benefits from the unfunded contributions of Oana Ignat, who is a postdoctoral fellow.


Publications:

Ignat, O., Castro, S., Miao, H., Li, W., & Mihalcea, R. (2021). WhyAct: Identifying Action Reasons in Lifestyle Vlogs. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing (pp. 4770-4785), Online and Punta Cana, Dominican Republic. Association for Computational Linguistics. (https://arxiv.org/abs/2109.02747)

Castro, S., Wang, R., Huang, P., Stewart, I., Ignat, O., Liu, N., … & Mihalcea, R. (2022, May). FIBER: Fill-in-the-Blanks as a Challenging Video Understanding Evaluation Framework. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers) (pp. 2925-2940).

Ignat, O., Castro, S., Li, W., & Mihalcea, R. (2024, August). Learning Human Action Representations from Temporal Context in Lifestyle Vlogs. In Proceedings of TextGraphs-17: Graph-based Methods for Natural Language Processing (pp. 1-18).

Castro, S., Deng, N., Huang, P., Burzo, M., & Mihalcea, R. (2022, October). In-the-Wild Video Question Answering. In Proceedings of the 29th International Conference on Computational Linguistics (pp. 5613-5635).

Castro, S., Ignat, O., & Mihalcea, R. (2023, July). Scalable Performance Analysis for Vision-Language Models. In Proceedings of the 12th Joint Conference on Lexical and Computational Semantics (*SEM 2023) (pp. 284-294).