Vehicle Controls & Behaviors

Integrative

Adaptive Trajectory Planning in Response to Partial Loss of Sensor Data

Project Team

Principal Investigator

Daniel Carruth, Mississippi State University

Tulga Ersal, University of Michigan

Government

Paramsothy Jayakumar, Michael Cole, U.S. Army GVSC

Industry

Hui Zhou, Oliver Jeromin, Rivian

Nicholas Gaul, RAMDO Solutions

Akshar Tandon, Tesla

Student

Siyuan Yu, Congkai Shen, University of Michigan

Project Summary

Project IE.02 began in 2023 and was completed in June 2024.

This integration effort produced the case study “What’s a Little Dirt in the Eye? Detection and Adaptive Planning for Off-Road Autonomous Navigation under Soiled Sensors,” presented at the 2024 ARC Annual Program Review.

Case Study Abstract

Reliable navigation by off-road autonomous ground vehicles faces a critical hurdle: continuing safe operation when sensor data is compromised by environmental factors such as dirt, mud, or water on sensors. This integration effort tackles this challenge by leveraging and extending two key ARC projects to combine robust perception with adaptive trajectory planning to ensure continuous and reliable autonomous operation under sensor soiling.

For Project 1.38, Mississippi State University (MSU) used neural networks to address partial occlusion of camera sensors by dirt or mud. The system detects the presence of occlusions, segments the occlusion from the image, diagnoses the potential effects on system functions, and attempts to fill in small occlusions. The integration effort extends the detection and segmentation to LiDAR and estimates the reduction in effective field of view for the LiDAR system.
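To make the idea of a reduced effective field of view concrete, the following is a minimal sketch, not the project's method. It assumes a 360-degree scan in which each beam is already labeled valid or invalid, groups beams into azimuth bins, and marks a bin as occluded when too few of its beams are valid. The function name `occluded_fov`, the bin width, and the validity threshold are all hypothetical choices made for illustration.

```python
def occluded_fov(azimuths_deg, returns_valid, bin_width_deg=5.0,
                 min_valid_fraction=0.2):
    """Estimate which azimuth bins of a 360-degree scan are occluded.

    azimuths_deg: beam azimuths in degrees (any range; wrapped to [0, 360)).
    returns_valid: one boolean per beam, True if the beam gave a usable return.
    Returns (occluded_bins, effective_fov_deg).
    All thresholds here are illustrative assumptions, not values from the project.
    """
    n_bins = int(round(360.0 / bin_width_deg))
    total = [0] * n_bins
    valid = [0] * n_bins

    # Count total and valid beams per azimuth bin.
    for az, ok in zip(azimuths_deg, returns_valid):
        b = int((az % 360.0) // bin_width_deg) % n_bins
        total[b] += 1
        if ok:
            valid[b] += 1

    # A bin with no beams, or with too few valid beams, is treated as occluded.
    occluded = [
        b for b in range(n_bins)
        if total[b] == 0 or valid[b] / total[b] < min_valid_fraction
    ]
    effective_fov_deg = 360.0 - len(occluded) * bin_width_deg
    return occluded, effective_fov_deg


# Example: one beam per degree, with a soiled patch covering 30 to 59 degrees.
azimuths = list(range(360))
valid_flags = [not (30 <= a < 60) for a in azimuths]
bins, fov = occluded_fov(azimuths, valid_flags, bin_width_deg=10.0)
```

A real system would need to distinguish soiling from beams that legitimately return nothing, such as those pointing at open sky, which this sketch does not attempt.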

For Project 1.A89, the University of Michigan (UM) developed trajectory planning algorithms for pushing autonomous vehicles to their limits on uncertain terrains with soft soils. The integration effort extends this work to incorporate the estimated occluded field of view of the LiDAR as input and dynamically adapts the vehicle’s trajectory to bring the occluded areas into the LiDAR’s field of view while still ensuring safe traversal of the environment.

The resulting framework has been integrated with the open-source NATO autonomy stack, based on Mississippi State University’s NATURE stack from ARC Project 1.31. Simulated and physical trials with Polaris MRZRs at both MSU and UM demonstrate significant navigational robustness improvements, ensuring reliable vehicle operation even when sensors are compromised. This synergy between perception and planning enables more resilient autonomous vehicle operation in challenging environments.

This integration effort aims to enable an autonomous vehicle to continue operating reliably under partial LiDAR data loss due to sensor soiling. To achieve this aim, the specific goals are to:

- Characterize and simulate LiDAR data loss due to sensor soiling (1.38).

- Integrate perception modules (1.31) with adaptive trajectory planner (1.A89).

- Extend perception modules for real-time detection and quantification of LiDAR data loss.

- Extend the adaptive trajectory planner to include environment uncertainty and sensor state in path planning. By controlling speed and heading, the planner should reduce risk to the vehicle and bring working sensors to bear on uncertain sections of the environment.

- Demonstrate adaptive path planning in response to partial sensor loss in simulation and on the vehicle.

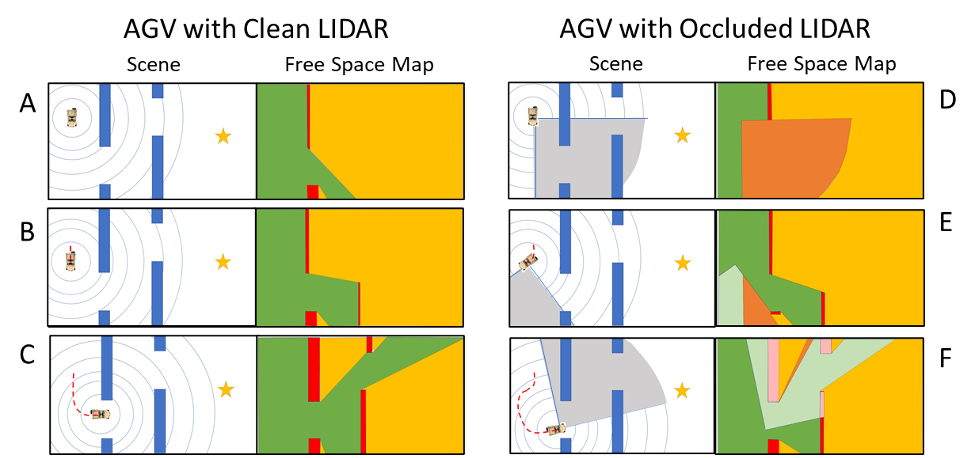

When the autonomous vehicle’s LiDAR is clean, the LiDAR immediately (A) detects free space in front of the vehicle and through the opening in the first wall. The vehicle moves from its initial position, through the free space, and to the first opening (B). As it approaches the opening (C), the LiDAR detects additional free space, the second wall, and the second opening, allowing the vehicle to generate a path to complete its task.

When the LiDAR has been occluded, the vehicle’s ability to map free space is impaired. The vehicle is aware that the sensor is blocked (D) and that the content of the space is unknown and unseen. Based on its knowledge of the goal position and the working arc of the LiDAR, the adaptive trajectory planner generates a local path that steers the vehicle so that the LiDAR sweeps the unknown space, allowing it to detect the first opening (E). The vehicle turns toward the opening, relying on its internal persistent map of the free and occupied space that was generated in real time by the vehicle’s localization and mapping system. The vehicle passes through the first opening and detects the second opening as the LiDAR sweeps from right to left across the second wall (F).
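The steering behavior described in this scenario can be illustrated with a simplified heading-selection sketch. It is not the project's planner, which also handles vehicle dynamics, terrain, and safety constraints. The sketch assumes the working arc of the LiDAR is described by a center angle and half-width in the vehicle frame, and it searches candidate headings for the one closest to the goal bearing that still places the unknown region inside the working arc. The function names and parameters are hypothetical.

```python
import math


def wrap(angle):
    """Wrap an angle in radians to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def choose_heading(goal_bearing, unknown_bearing, arc_center, arc_half_width,
                   n_candidates=72):
    """Pick a heading that points the working LiDAR arc at the unknown region.

    goal_bearing, unknown_bearing: world-frame bearings in radians.
    arc_center, arc_half_width: working arc of the sensor in the vehicle frame.
    Returns the feasible candidate heading closest to the goal bearing,
    or None if no candidate covers the unknown region.
    This is an illustrative grid search, not the planner used in the project.
    """
    best = None
    for k in range(n_candidates):
        heading = -math.pi + 2.0 * math.pi * k / n_candidates

        # Offset of the unknown region from the center of the working arc
        # when the vehicle faces this candidate heading.
        offset = wrap(unknown_bearing - heading - arc_center)
        if abs(offset) > arc_half_width:
            continue  # the working arc would not see the unknown region

        # Prefer headings that deviate least from the direction of the goal.
        cost = abs(wrap(goal_bearing - heading))
        if best is None or cost < best[0]:
            best = (cost, heading)
    return None if best is None else best[1]


# Example: goal and unknown region straight ahead, but only an arc on the
# vehicle's left side (centered at +90 degrees, 47 degrees half-width) works.
heading = choose_heading(0.0, 0.0, math.pi / 2, math.radians(47))
```

In this example the selected heading turns the vehicle to the right by about 45 degrees, so that the left-side working arc covers the region directly toward the goal.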
